High Dynamic Range Images

August 6, 2006

There's a lot of interest on the web in high dynamic range (HDR) images. There are two ideas involved. The first is to make an image that can represent detail in very dark and very bright spots at the same time. Consumer cameras can't do this, so instead the photographer takes a sequence of images of the same scene but with different shutter speeds. These are combined to create a single image with, for example, 32 bits per component, or a float per component. Photoshop CS2 and a program called Photomatix can both do this. Once you have this image you can do all sorts of extreme manipulation, like contrast enhancements, without losing color precision.

The second idea is to then compress this HDR image into a normal 8-bit image, keeping the parts of the image that have color precision. I was only interested in this aspect, so I wrote a program that goes straight from a set of bracketed images to a final image without generating the intermediate high dynamic range image.

In the first image below the building looks fine but the sky is blown out.

I took another image of the same building but with my brightness setting turned down. (This was a lower-end camera where I couldn't control the shutter speed, but the effect is more or less the same.) I didn't have a tripod, so the pictures didn't align perfectly. I used Photoshop to rotate the darker picture to match the brighter one.

My program takes both pictures above and generates the one below. It can take any number of images, but in this case I only had two. Three works well if some of the scene is in the middle tones.

In the real scene the sky was much brighter than the building (as captured by the camera in both images above). The eye is able to vary its contrast over time, to adjust for when you go from a dark room into the bright outside day. But it can also adjust over the retina, so that the bright sky looks more or less as bright as the building. What I saw when I took those pictures matches that last picture.

You'll notice the glow around the building. That's because the algorithm tries to find areas of low and high brightness, and those areas bleed into one another, hence the halo. I tried various things to fix that but they only resulted in other, worse artifacts. Some of the pictures I saw from the Photoshop algorithm also have a halo, so this may be an inherent problem. Perhaps the eye even has this problem! It'd be hard to tell, since your mind would surely compensate for it.

The algorithm is as follows:

- For each image, generate a grayscale mask with values between 0 and 255 this way:

  - For each input pixel, calculate the mean: (r + g + b)/3

  - Map values 0 to 127 to masks 0 to 255. Map values 128 to 255 to masks 255 to 0.

- Blur the masks. I used a 100-pixel radius blur on a 6 megapixel image, or about 5% of the image height. The blur is used to keep local contrast. For example, we don't want the people's white shirts in the above images to come from the dark image just because they're close to white. Without the blur the image has a washed-out look.

- Blend the images as a weighted sum of their masks, pixel-wise.
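The steps above can be sketched in a few lines of plain Python. This is not the program described here, just a minimal illustration under some assumptions: images are nested lists of (r, g, b) tuples, the blur is a simple box filter (the kind of blur isn't specified above), and the weighted sum is divided by the total weight so the result stays in the 0 to 255 range. The names `tent_mask`, `box_blur`, and `blend` are made up for this sketch.

```python
def tent_mask(r, g, b):
    """Mask value in 0..255: high for mid-tones, low near black or white."""
    mean = (r + g + b) / 3
    if mean < 128:
        # 0..127 maps to 0..255 (clamped, since a fractional mean can exceed 127).
        return min(255.0, mean * 255 / 127)
    # 128..255 maps to 255..0.
    return (255 - mean) * 255 / 127


def box_blur(mask, radius):
    """Average each value with its neighbors in a square window.

    A box filter is an assumption of this sketch. The window is cropped
    at the image borders.
    """
    height, width = len(mask), len(mask[0])
    out = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            total, count = 0.0, 0
            for yy in range(max(0, y - radius), min(height, y + radius + 1)):
                for xx in range(max(0, x - radius), min(width, x + radius + 1)):
                    total += mask[yy][xx]
                    count += 1
            out[y][x] = total / count
    return out


def blend(images, radius):
    """Blend same-sized images (2D lists of (r, g, b) tuples) by blurred mask."""
    masks = [
        box_blur([[tent_mask(*pixel) for pixel in row] for row in image], radius)
        for image in images
    ]
    height, width = len(images[0]), len(images[0][0])
    result = []
    for y in range(height):
        row = []
        for x in range(width):
            weights = [mask[y][x] for mask in masks]
            total = sum(weights)
            if total == 0:
                # All masks are zero here: fall back to a plain average.
                weights = [1.0] * len(images)
                total = float(len(images))
            row.append(tuple(
                round(sum(w * image[y][x][c] for w, image in zip(weights, images)) / total)
                for c in range(3)
            ))
        result.append(row)
    return result


# Toy 1x2 "scene": the left pixel is building, the right pixel is sky.
bright = [[(120, 120, 120), (255, 255, 255)]]  # sky blown out
dark = [[(20, 20, 20), (130, 130, 130)]]       # building too dark
print(blend([bright, dark], radius=0))
```

With the blur turned off (`radius=0`), the sky pixel comes entirely from the darker image, because the blown-out sky gets a mask of 0, and the building pixel comes mostly from the brighter one. This brute-force blur is far too slow for a 100-pixel radius on a real photo; it's only meant to show the structure.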

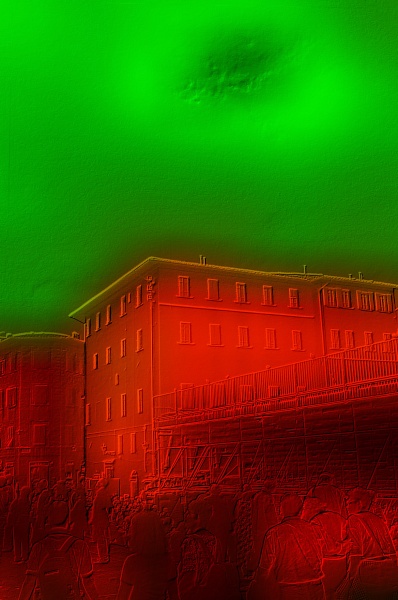

The masks for the above pictures look like this:

I've combined both masks into a single image where the red component is the mask of the brighter image and the green component is the mask of the darker image. Here's the mask again but with an outline of the original overlaid for reference:

Note how the blur causes the brighter image's sky to be used right around the building, causing the halo. Also, each mask has variations, like the dark hole in the sky, but that doesn't matter as long as (in the case of the sky) the green mask component is much higher than the red one. The bulk of the final picture will still come from the darker image.
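To make the red/green picture concrete, here is a small sketch of how two masks could be packed into one image and how to read it back. It assumes masks are nested lists of values between 0 and 255; `combine_masks` and `dominant_source` are names invented for this example.

```python
def combine_masks(bright_mask, dark_mask):
    """Pack two grayscale masks into one RGB image.

    Red carries the brighter image's mask, green the darker image's mask.
    """
    return [
        [(round(red), round(green), 0) for red, green in zip(bright_row, dark_row)]
        for bright_row, dark_row in zip(bright_mask, dark_mask)
    ]


def dominant_source(pixel):
    """Say which input image contributes more at this pixel of the combined mask."""
    red, green, _ = pixel
    if green > red:
        return "dark"
    if red > green:
        return "bright"
    return "even"


# Left value is a building pixel, right value is a sky pixel.
combined = combine_masks([[200.0, 10.0]], [[30.0, 240.0]])
print([dominant_source(p) for p in combined[0]])
```

Only the ratio between red and green matters for the blend, which is why a dark patch in one mask does no harm as long as the other component is darker still.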

Technical notes: The blending is done in gamma space, not in linear space. This isn't correct, but I tried linear space and the glow was far worse (and nothing else was better). Perhaps I should do the math in a gamma space higher than 2.2! Also, above I specify the “mean” of red, green, and blue. I tried using the correct luminosity function (0.299*r + 0.587*g + 0.114*b) but this also made the glow worse without improving anything else. It might be fun to manually create the “perfect” image in Photoshop (by cropping the sky) and then loop over a set of parameters (blending space, mean function, blur radius, mask map) to see which work best for this particular picture.
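For reference, here is a rough sketch of the two choices discussed above: blending in gamma space versus linear space, and the plain mean versus the weighted luminosity formula. It assumes a simple power-law gamma of 2.2 rather than the exact piecewise sRGB curve, and the function names are invented for this example.

```python
def to_linear(value, gamma=2.2):
    """Convert a 0..255 gamma-encoded value to linear light in 0..1."""
    return (value / 255) ** gamma


def to_gamma(linear, gamma=2.2):
    """Convert linear light in 0..1 back to a 0..255 gamma-encoded value."""
    return 255 * linear ** (1 / gamma)


def blend_gamma(a, b, weight_a, weight_b):
    """Weighted average computed directly on the gamma-encoded values."""
    return (weight_a * a + weight_b * b) / (weight_a + weight_b)


def blend_linear(a, b, weight_a, weight_b, gamma=2.2):
    """Weighted average computed in linear light, then re-encoded."""
    linear = (weight_a * to_linear(a, gamma) + weight_b * to_linear(b, gamma)) / (
        weight_a + weight_b
    )
    return to_gamma(linear, gamma)


def mean_brightness(r, g, b):
    """Plain average of the three components."""
    return (r + g + b) / 3


def luminosity(r, g, b):
    """Weighted brightness using the coefficients quoted above."""
    return 0.299 * r + 0.587 * g + 0.114 * b


# A dark value and a bright value blended with equal weight.
print(blend_gamma(50, 200, 1, 1), blend_linear(50, 200, 1, 1))
# Pure blue looks much darker to the luminosity formula than to the mean.
print(mean_brightness(0, 0, 255), luminosity(0, 0, 255))
```

For two different input values, the linear-space result always comes out brighter than the gamma-space one, so where the masks mix a bright pixel with a dark one, the linear blend leans toward the bright pixel.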

Thanks to Drew Olbrich for some of the ideas in the algorithm.